RESEARCH TRIANGLE PARK, NC, Feb 25, 2025 – Lenovo announced three new infrastructure solutions powered by Intel Xeon 6 processors designed to modernize and elevate data centers of any size to AI-enabled powerhouses. The solutions include next-generation Lenovo ThinkSystem V4 servers that deliver advanced-level performance and unique versatility to handle any workload while enabling AI capabilities in compact, high-density designs. Whether customers are using edge computing, co-locating, or adopting a hybrid cloud, Lenovo provides appropriate solutions that efficiently enable intelligence and bring AI wherever it is needed.

The new Lenovo ThinkSystem servers are purpose-built to run the widest range of workloads, including the most compute-intensive – from algorithmic trading to web serving, astrophysics to email, and CRM to CAE. Organizations can streamline management and boost productivity with the new systems, achieving up to 6.1x higher compute performance than previous generation CPUs1 with Intel Xeon 6 with P-cores and up to 2x the memory bandwidth2 when using new MRDIMM technology to scale and accelerate AI everywhere.

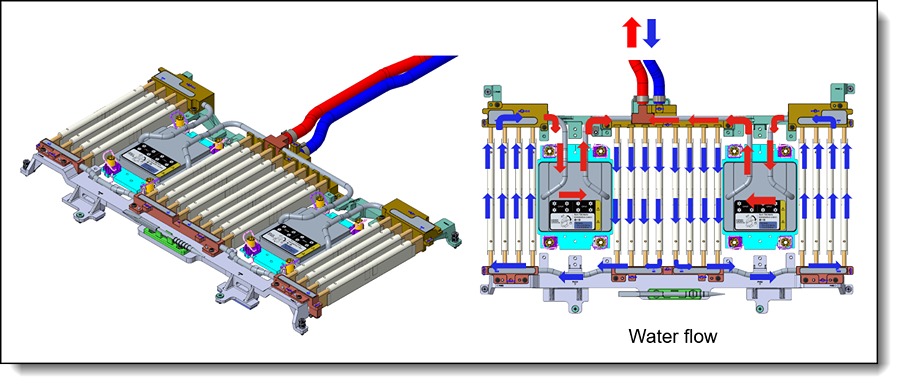

The systems are designed to address critical power limitations while enhancing the performance needed for demanding AI tasks. With ongoing improvements in Lenovo Neptune liquid cooling innovation, Lenovo is creating an effective approach to data center design that reshapes how power is utilized in IT. Lenovo Neptune water cooling boosts thermal efficiency by 3.5x, reducing power consumption used for cooling IT equipment while increasing its processing power3. With increased density and efficiency, the new Lenovo ThinkSystem V4 servers with Intel Xeon 6 with E-cores enable up to 3:1 rack consolidation of 5-year-old infrastructure, freeing up space and power for new AI projects4.

“Lenovo is reimagining what’s possible in the data center by delivering intelligent and versatile infrastructure solutions that simplify and accelerate IT modernization,” said Scott Tease, vice president of Lenovo Infrastructure Solutions Group, products. “The new Lenovo ThinkSystem V4 servers represent the next generation of performance and innovation, achieving higher compute with less energy consumption and delivering AI-powered management that empowers businesses with fast and protected AI deployment across any environment. With Intel, we’re enabling our customers to scale smarter and evolve faster to achieve AI-powered transformation.”

Flexibility and Performance without Compromise

Lenovo ThinkSystem V4 servers equipped with Intel Xeon 6 processors with P-cores offer superior performance and productivity. They are designed to handle demanding AI challenges across various compute-intensive tasks. The servers are built for reliability and can adapt to different settings, including colocation services. This is essential for organizations that need high-performance private AI but lack the necessary data center space or liquid cooling infrastructure. Enhanced security features safeguard data effectively, regardless of its location. A new locking bezel option secures physical assets in remote environments.

The new Lenovo ThinkSystem V4 servers include:

- SR630 V4: data center powerhouse that is high-density, space-efficient, and designed to optimize performance in compact environments while delivering unique computing power and scalability for demanding workloads. The super-dense 1U system is ideal for cloud service providers (CSPs), telcos and fintech operations, enabling them to manage real-time transactions requiring low latency and high throughput with limited floor space.

- SR650 V4: delivers versatility for any workload with up to 25% more single-wide (SW) GPU capacity for up to 2x computation performance in a compact 2U form factor5. It is ideal for engineering, modeling & simulation, and AI with rapid time to value at up to 50% cost savings for GPU-intensive workloads.

- SR650a V4: purpose-built to deliver AI power in a dense package that enables GPU-intensive workloads like machine learning, virtual desktop infrastructure (VDI), and media analytics. The 2U2S platform supports up to four double-wide GPUs with front GPU access, ensuring unmatched performance and ease of maintenance without compromising memory capacity. This server is ideal for organizations looking to drive AI innovation in a dense, efficient form factor.

The Lenovo ThinkSystem V4 solutions also extend Lenovo Neptune liquid cooling from the CPU to the memory with the new Neptune Core Compute Complex Module, supporting faster workloads with reduced fan speeds, quieter operation, and lower power consumption. Fans can consume as much as 18% of the power used by servers. The new Neptune module is precisely engineered to reduce air flow requirements, yielding lower fan speeds and power consumption while keeping the parts cooler for improved system health and lifespan. The module also expands cooling to four SW GPUs in the new ThinkSystem SR650a.

AI-Powered Management for Smarter AI Everywhere

Businesses can deploy complex AI applications with integrated Lenovo systems management that saves time and resources across the ThinkSystem V4 portfolio. As a central command, XClarity One provides an insightful user interface that ensures fast, efficient, and protected deployment from one system to a thousand. New integrated enterprise remote control enables remote access and management of enterprise infrastructure no matter where it exists. Additionally, new AI-powered analytics offer server SSD predictive failure analysis (PFA) on XClarity One, helping to eliminate downtime by identifying potential problems with DIMMs before they fail. Finally, XClarity One now offers a Federated Directory that centrally manages system access across multiple applications through a unified registry and account.

Lenovo continues to redefine innovation with adaptable and responsible AI solutions that make AI accessible to everyone. Its technology, from edge computing to data centers, helps organizations discover new opportunities, enhance operations, and keep pace in an AI-driven world.

1 Up to 6.1x higher performance for compute-intensive workloads such as HPC, AI and database vs. 2nd Gen Intel Xeon CPUs. See [9G10], [9H10], [9A210] at intel.com/processorclaims: Intel Xeon 6. Results may vary.

2 Comparing the SR650a V4 to the SR650 V4: the SR650a V4 with 4x H100 GPUs provides 2x the AI computation performance of the SR650 V4 with 2x H100 GPUs.

3 Lenovo Internal data ESG DECK

4 See [7T1] at intel.com/processorclaims: Intel Xeon 6. Results may vary.

5 Lenovo internal data for the SR650 V4, based on internal benchmark testing.

Source: Lenovo

About Lenovo

Lenovo Group Limited, founded in 1984, is a multinational tech company specializing in designing, manufacturing, and marketing consumer electronics, personal computers, software, servers, and related services. Serving industries such as education, healthcare, retail, and manufacturing, Lenovo offers a diverse product portfolio that includes laptops, desktops, tablets, smartphones, workstations, servers, storage devices, and accessories. The company markets its products under renowned brands like ThinkPad, ThinkBook, IdeaPad, Yoga, Motorola, and Legion. Headquartered in Beijing, China, and Morrisville, NC, Lenovo operates in over 60 countries and sells its products in approximately 160 countries worldwide. In the fiscal year ending March 2023, Lenovo reported revenues of $61.9 billion, maintaining its position as a global leader in the personal computer market with a 24.4% market share.